Over the past two years, artificial intelligence has shifted from experimental to existential for enterprises. The release of powerful Large Language Models (LLMs) triggered one of the fastest technology adoption cycles in business history. According to McKinsey’s Global AI Survey, over 50% of organizations report adopting AI in at least one business function, with generative AI investments accelerating rapidly. Gartner similarly predicts that AI will be embedded in the majority of enterprise software platforms within the next few years.

Across industries, sustainability leaders are being asked:

“How are we using AI in ESG?”

“Can AI automate reporting?”

“Can we deploy large language models for disclosures?”

“Are we leveraging generative AI to stay ahead?”

Behind these questions sits a common assumption: bigger models mean better intelligence.

If LLMs can write essays, summarize books, and pass professional exams, surely they can handle ESG reporting, regulatory mapping, and sustainability disclosures. But this is where the hype collides with reality.

ESG is not a general knowledge problem. It is a structured, domain-heavy, compliance-critical problem.

ESG reporting involves:

Framework-specific disclosures (CSRD, ISSB, GRI, BRSR, CDP)

Scope 1, 2, and complex Scope 3 emissions calculations

Double materiality assessments

Audit-traceable data trails

Regulatory liability

In this environment, creativity is less valuable than consistency. Fluency is less important than traceability. And scale means little without precision.

The real question ESG leaders should be asking is not:

“How large is the model?”

But: “Is this AI designed for the structure, accountability, and governance requirements of ESG?”

That distinction changes everything.

The Unique Nature of ESG Data

To understand why AI strategy in ESG must be different, we need to understand the nature of ESG data itself.

Unlike traditional enterprise data, ESG information sits at the intersection of regulation, operations, finance, and risk. It is not just descriptive; it is declarative. It makes claims, and those claims can be audited.

Consider what ESG teams actually manage:

Scope 1, 2, and especially complex Scope 3 emissions calculations

Supplier-level activity data across geographies

Double materiality assessments linking financial risk and environmental impact

Cross-framework reporting (CSRD, ISSB, GRI, BRSR, CDP)

Evidence-backed disclosures subject to third-party assurance

This is not unstructured internet text. It is structured, framework-bound, traceable data.

ESG Is Framework-Constrained

Each sustainability framework has its own logic, terminology, and disclosure architecture. A single data point, say energy consumption, may need to be:

Categorized differently under GRI vs. ISSB

Linked to financial materiality under CSRD

Mapped to risk disclosures in annual filings

Connected to emissions factors for GHG accounting

The intelligence required here is not generative fluency. It is controlled mapping, rule-based alignment, and contextual precision.

ESG Is Audit-Sensitive

Increasingly, ESG disclosures are subject to limited or reasonable assurance. Under regulations like CSRD, companies face legal accountability for inaccurate reporting. Investors, regulators, and rating agencies scrutinize inconsistencies.

In this context:

Hallucinations are not harmless errors.

Inconsistencies are not cosmetic flaws.

Ambiguity creates regulatory risk.

Accuracy, traceability, and explainability become non-negotiable.

ESG Is Domain-Heavy

Emissions accounting alone requires understanding:

Activity data vs. spend-based calculations

Emission factors and regional adjustments

Boundary setting and consolidation approaches

Category-specific Scope 3 methodologies
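To make the first distinction in this list concrete, here is a minimal Python sketch of the two calculation approaches. The function names, quantities, and emission factor values are illustrative assumptions, not figures from any published factor library.

```python
def activity_based_emissions(quantity: float, factor_per_unit: float) -> float:
    """Emissions (kg CO2e) = activity quantity x emission factor per unit of activity."""
    return quantity * factor_per_unit


def spend_based_emissions(spend: float, factor_per_currency: float) -> float:
    """Emissions (kg CO2e) = amount spent x emission factor per unit of currency."""
    return spend * factor_per_currency


# Hypothetical inputs: 12,000 kWh of electricity at an assumed 0.4 kg CO2e per kWh,
# and 50,000 USD of purchased services at an assumed 0.2 kg CO2e per USD.
electricity_kg = activity_based_emissions(12_000, 0.4)
services_kg = spend_based_emissions(50_000, 0.2)

print(f"Electricity: {electricity_kg:.1f} kg CO2e")
print(f"Purchased services: {services_kg:.1f} kg CO2e")
```

The arithmetic is trivial. The domain knowledge lies in choosing the right approach, factor, and boundary for each data point.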

What Are Small Language Models and Why They Fit ESG Better

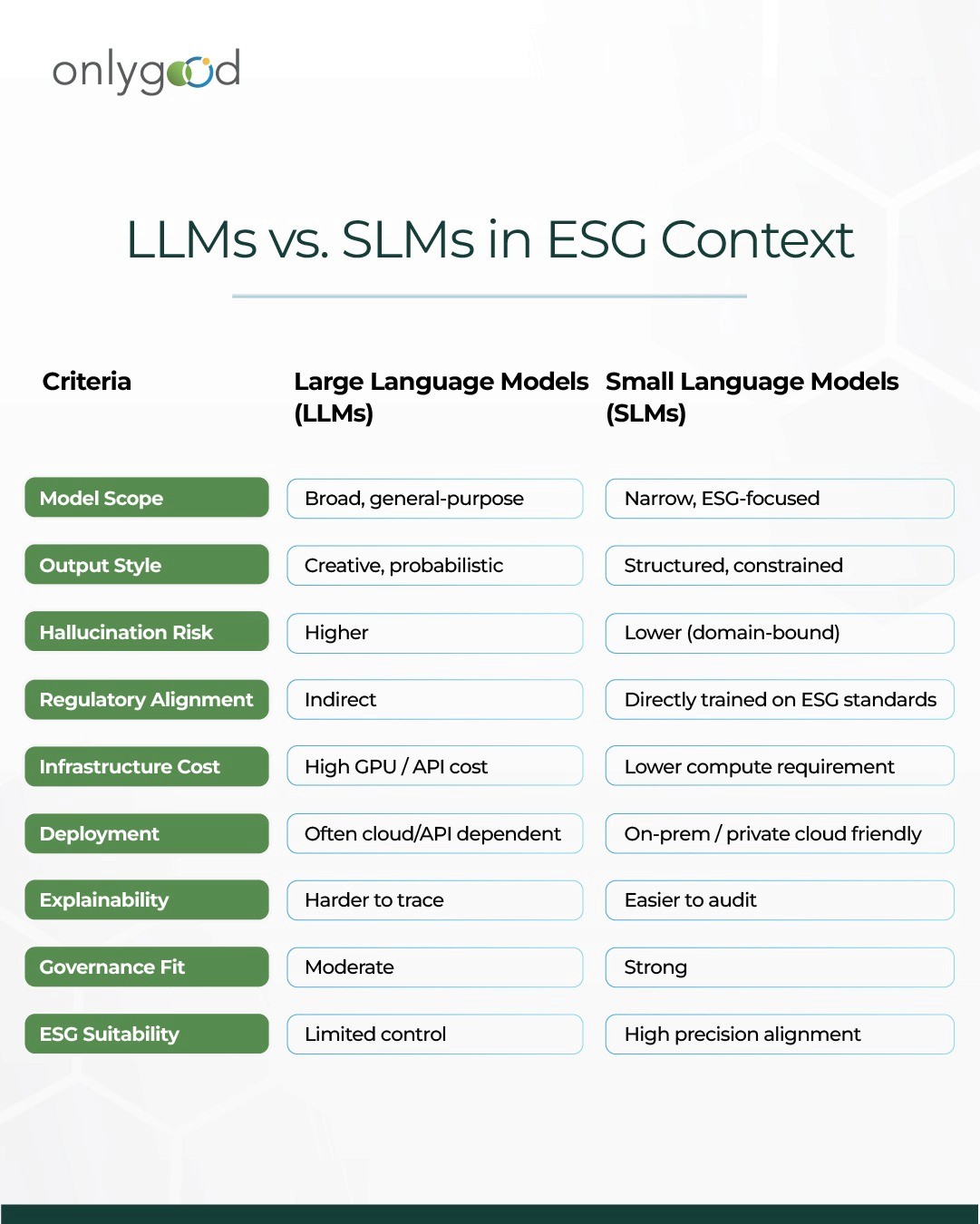

If Large Language Models are designed to know a little about everything, Small Language Models (SLMs) are designed to know a lot about one thing.

A Small Language Model is typically:

Trained or fine-tuned on a narrow, domain-specific dataset

Built with fewer parameters than frontier LLMs

Optimized for precision over generality

Integrated within structured workflows rather than open-ended chat

But “small” does not mean weak.

It means focused.

The Architectural Difference

Large models are optimized for:

Open-ended reasoning

Creative generation

Broad domain coverage

Conversational flexibility

Small models are optimized for:

Repetitive, high-accuracy tasks

Rule-bound decision logic

Controlled outputs

Lower compute requirements

Easier deployment in private environments

In ESG, that difference matters.

Because ESG tasks are rarely open-ended. They are constrained.

You are not asking: “Write me an imaginative story about climate change.”

You are asking:

“Map this disclosure to CSRD ESRS E1.”

“Classify this supplier under Scope 3 Category 1 or 4.”

“Flag inconsistencies between energy data and reported emissions.”

“Check alignment between narrative disclosure and numeric tables.”

These are not creative prompts. They are structured logic problems.

Why Specialization Outperforms Scale in ESG

A purpose-built SLM can be:

Fine-tuned on GHG Protocol methodologies

Constrained by ESRS disclosure logic

Embedded with predefined emission factor libraries

Guardrailed to prevent unsupported claims

Designed to output structured JSON instead of paragraphs

This dramatically reduces:

Hallucination risk

Inconsistent mapping

Overly verbose or speculative responses

Compliance exposure
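As a rough illustration of what "structured JSON instead of paragraphs" can look like in practice, the sketch below validates a model response against a small schema and rejects any claim that lacks a source reference. The field names (`metric`, `value`, `unit`, `source_ref`) and the function name are assumptions chosen for this example, not part of any standard.

```python
import json

# Hypothetical schema: every disclosure record must carry these fields.
REQUIRED_FIELDS = {"metric", "value", "unit", "source_ref"}


def parse_disclosure(raw: str) -> dict:
    """Parse a model response and reject anything outside the agreed schema."""
    record = json.loads(raw)  # malformed JSON raises a ValueError subclass
    if not isinstance(record, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_FIELDS - record.keys()
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    value = record["value"]
    if isinstance(value, bool) or not isinstance(value, (int, float)):
        raise ValueError("value must be numeric")
    ref = record["source_ref"]
    if not isinstance(ref, str) or not ref.strip():
        raise ValueError("unsupported claim: no source reference")
    return record
```

A response that fails validation never reaches the report; it is sent back for correction or routed to a human reviewer.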

The Strategic Shift

For ESG organizations, the goal is not to deploy the largest AI model available.

It is to deploy the most relevant model possible.

Small Language Models represent a shift from:

General intelligence → Domain intelligence

Conversational AI → Workflow AI

Experimentation → Governance

Hype → Reliability

And in compliance-critical environments, reliability wins.

Why Large Models Struggle in ESG Environments

Large Language Models are remarkable general-purpose systems. But their strengths are not always aligned with the realities of ESG operations.

In sustainability reporting, precision is not optional. It is regulatory.

Here are the structural reasons why deploying large, general-purpose models in ESG can introduce risk rather than resilience.

1. Hallucination Risk in Compliance Contexts

LLMs are probabilistic systems. They generate responses based on patterns in data, not verified facts.

In marketing, a slightly embellished paragraph may go unnoticed. In ESG disclosures, a fabricated reference, incorrect metric, or misaligned framework mapping can lead to:

Audit qualifications

Investor mistrust

Regulatory scrutiny

Legal exposure

When disclosures are subject to third-party assurance under regulations like CSRD, explainability and source traceability are mandatory. A model that cannot clearly justify its output creates governance risk.

2. Framework Mapping Inconsistencies

Large models are trained on vast, heterogeneous data. ESG frameworks, however, are highly specific and frequently updated.

For example:

ESRS terminology differs from GRI definitions.

ISSB emphasizes investor materiality, while CSRD requires double materiality.

Scope 3 categories must follow GHG Protocol classification rules precisely.

A general-purpose model may “understand” sustainability broadly but struggle with consistent, rule-bound mapping across frameworks, especially when terminology overlaps but definitions differ.

In ESG, near-correct is still incorrect.

3. Limited Explainability for Audits

Auditors increasingly expect:

Data lineage

Calculation transparency

Clear methodology documentation

Large black-box models make it difficult to explain:

Why a classification decision was made

How a materiality tag was assigned

What rule triggered a disclosure recommendation

If the AI output cannot be traced back to structured logic or predefined rules, it weakens assurance readiness.

4. Data Privacy and Governance Concerns

ESG data often includes:

Supplier-level operational information

Financial performance indicators

Energy consumption and production data

Internal risk assessments

Sending sensitive sustainability data to external, large-scale AI systems can raise:

Confidentiality concerns

Cross-border data transfer issues

Governance and cybersecurity risks

For many enterprises, especially in regulated sectors, AI deployment must align with strict internal data policies.

5. Cost vs. Use-Case Mismatch

Frontier LLMs are computationally expensive. They are designed for broad reasoning tasks that exceed the needs of most ESG workflows.

Using a massive model to classify supplier emissions or validate structured data is often like using a supercomputer to run a spreadsheet.

The cost-to-value ratio becomes misaligned.

The Core Insight

Large models are optimized for breadth and creativity.

ESG requires constraint and consistency.

When sustainability leaders adopt AI purely based on model size or brand reputation, they risk introducing volatility into systems that demand stability.

In ESG, the margin for error is narrow.

And that is precisely why the AI architecture decision matters more than the AI trend.

Where Small Language Models Win: Practical ESG Use Cases

If Large Language Models promise breadth, Small Language Models deliver control.

In ESG environments, that control translates directly into reliability, audit confidence, and operational efficiency. Below are practical areas where domain-specific SLMs create measurable advantage.

1. Framework Mapping and Cross-Standard Alignment

One of the biggest pain points for ESG teams is translating a single dataset across multiple frameworks:

CSRD (ESRS)

GRI

ISSB

BRSR

CDP

Each framework asks similar but not identical questions. Terminology overlaps. Definitions vary. Disclosure granularity differs.

A domain-trained SLM can:

Map disclosures across frameworks using predefined logic

Identify missing fields based on structured requirements

Flag inconsistencies between narrative and numeric data

Maintain version control as standards evolve

Instead of manually reconciling spreadsheets, ESG teams get rule-based mapping automation.
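A minimal sketch of such predefined mapping logic might look like the following. The internal metric names and the lookup table are assumptions made for illustration, and the disclosure codes shown should be verified against the current text of each standard before any real use.

```python
# Illustrative lookup table: internal metric name -> disclosure code per framework.
# Codes are examples only and must be checked against the current standards.
FRAMEWORK_MAP = {
    "energy_consumption_mwh": {"GRI": "GRI 302-1", "ESRS": "ESRS E1-5"},
    "scope1_emissions_tco2e": {"GRI": "GRI 305-1", "ESRS": "ESRS E1-6"},
}


def map_to_framework(reported: dict[str, float], framework: str) -> dict:
    """Map reported metrics to one framework and list gaps in both directions."""
    mapped, unmapped = {}, []
    for metric, value in reported.items():
        code = FRAMEWORK_MAP.get(metric, {}).get(framework)
        if code is None:
            unmapped.append(metric)  # reported, but no mapping rule exists
        else:
            mapped[code] = value
    # Disclosures the framework expects but the dataset does not provide.
    missing = [
        codes[framework]
        for metric, codes in FRAMEWORK_MAP.items()
        if framework in codes and metric not in reported
    ]
    return {"mapped": mapped, "missing": missing, "unmapped": unmapped}


result = map_to_framework(
    {"energy_consumption_mwh": 4800.0, "water_use_m3": 120.0}, "ESRS"
)
print(result)
```

Because the table is plain data, it can be versioned and reviewed like any other controlled document as standards evolve.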

2. Scope 3 Emissions Classification

Scope 3 remains the most complex component of GHG accounting. It involves:

15 categories under the GHG Protocol

Supplier-level activity data

Spend-based vs. activity-based methodologies

Upstream and downstream differentiation

An ESG-focused SLM can:

Classify supplier transactions into correct Scope 3 categories

Detect anomalies in emission factors

Suggest appropriate calculation methodologies

Validate boundary definitions

Because the model is trained specifically on GHG logic, it operates within structured guardrails rather than guessing from broad sustainability language.
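One way to picture those guardrails is a thin acceptance layer around the model's proposed category. In this sketch, the category table follows the 15 GHG Protocol Scope 3 categories, while the function name, the confidence score, and the 0.9 threshold are assumptions chosen for illustration.

```python
# The 15 Scope 3 categories defined by the GHG Protocol.
SCOPE3_CATEGORIES = {
    1: "Purchased goods and services",
    2: "Capital goods",
    3: "Fuel- and energy-related activities",
    4: "Upstream transportation and distribution",
    5: "Waste generated in operations",
    6: "Business travel",
    7: "Employee commuting",
    8: "Upstream leased assets",
    9: "Downstream transportation and distribution",
    10: "Processing of sold products",
    11: "Use of sold products",
    12: "End-of-life treatment of sold products",
    13: "Downstream leased assets",
    14: "Franchises",
    15: "Investments",
}


def review_decision(category: int, confidence: float, threshold: float = 0.9) -> str:
    """Accept a proposed category only if it is valid and the model is confident."""
    if category not in SCOPE3_CATEGORIES:
        return "reject"  # not a real category: never accept
    if confidence < threshold:
        return "human_review"  # valid but uncertain: escalate to an analyst
    return "accept"


print(review_decision(4, 0.95))
print(review_decision(1, 0.60))
print(review_decision(16, 0.99))
```

The model proposes; the rule layer decides what is allowed into the inventory.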

3. Double Materiality Screening

Under CSRD, organizations must assess both:

Impact materiality (impact on environment and society)

Financial materiality (impact on enterprise value)

This requires systematic tagging of risks, opportunities, and stakeholder concerns.

A specialized SLM can:

Categorize risks according to ESRS taxonomy

Link sustainability topics to financial exposure categories

Ensure consistency across disclosures

Generate structured documentation for audit review

Instead of free-form narrative generation, the output becomes structured and traceable.

4. ESG Data Validation and Anomaly Detection

ESG reporting often involves consolidating data from:

ERP systems

Supplier submissions

Energy bills

Production systems

Manual uploads

Errors creep in through inconsistent units, missing values, or misaligned emission factors.

An SLM embedded within the data workflow can:

Detect numerical inconsistencies

Cross-check reported emissions against activity data

Flag improbable year-over-year shifts

Validate completeness against framework requirements

This shifts AI from “content generator” to “data integrity guardian.”
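The checks listed above can be sketched in a few lines of Python. The function name, the 5% tolerance, and the 30% year-over-year limit are assumptions for illustration; real thresholds would come from an organization's own methodology.

```python
from typing import Optional


def validate_record(
    activity_kwh: Optional[float],
    factor_kg_per_kwh: float,
    reported_kg: Optional[float],
    prior_year_kg: Optional[float],
    tolerance: float = 0.05,
    max_yoy_change: float = 0.30,
) -> list[str]:
    """Return a list of data-quality issues for one emissions record."""
    if activity_kwh is None or reported_kg is None:
        return ["missing value"]
    issues = []
    # Cross-check reported emissions against activity data x emission factor.
    expected_kg = activity_kwh * factor_kg_per_kwh
    if abs(reported_kg - expected_kg) > tolerance * max(expected_kg, 1e-9):
        issues.append("reported emissions do not match activity data")
    # Flag improbable year-over-year shifts (skipped when no prior value exists).
    if prior_year_kg and abs(reported_kg - prior_year_kg) / prior_year_kg > max_yoy_change:
        issues.append("improbable year-over-year shift")
    return issues


print(validate_record(1000, 0.5, 500, 480))
print(validate_record(1000, 0.5, 900, 480))
print(validate_record(None, 0.5, 500, None))
```

Each flagged issue is a plain string, so the result can be logged alongside the record as part of the audit trail.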

5. Controlled Drafting with Guardrails

There is still a role for language generation in ESG, but it must be constrained.

A small, domain-tuned model can:

Draft CDP or EcoVadis responses within predefined templates

Ensure language aligns with actual data

Prevent unsupported claims or greenwashing language

Maintain consistency across sections

The key difference is guardrails.

The model operates within:

Structured data inputs

Pre-approved language frameworks

Compliance logic

Version-controlled disclosures

That reduces reputational and regulatory risk.
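One simple guardrail of this kind checks that every number in a drafted sentence traces back to approved data. The sketch below is an assumption-laden illustration: the function name, the sample data, and the regular expressions are hypothetical, and labels that contain standalone digits (such as "Scope 1") would need to be whitelisted separately.

```python
import re


def unsupported_numbers(draft: str, approved: dict[str, float]) -> list[str]:
    """Return numbers that appear in the draft but not in the approved data."""
    allowed = {float(v) for v in approved.values()}
    # Remove thousands separators so "4,800" is read as 4800.
    text = re.sub(r"(?<=\d),(?=\d{3})", "", draft)
    # Match standalone numbers; digits inside tokens like "tCO2e" are ignored.
    found = re.findall(r"(?<![\w.])\d+(?:\.\d+)?(?!\w)", text)
    return [n for n in found if float(n) not in allowed]


data = {"reporting_year": 2024, "electricity_mwh": 4800.0}
good = "Total electricity consumption in 2024 was 4,800 MWh."
bad = "Total electricity consumption in 2024 was 4,800 MWh, a 12% reduction."

print(unsupported_numbers(good, data))
print(unsupported_numbers(bad, data))
```

The second draft is flagged because the "12%" claim has no counterpart in the structured inputs, which is exactly the kind of unsupported statement a guardrail should stop before publication.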

The Practical Advantage

In each of these cases, the advantage of Small Language Models is not just technical; it is strategic:

Lower hallucination risk

Easier audit explainability

Better data governance

Reduced infrastructure cost

Faster deployment within enterprise systems

They are not trying to simulate general intelligence.

They are engineered to solve ESG’s specific problems.

Risk, Compliance & Trust: Why Smaller Is Safer in ESG

If there is one area where AI decisions carry real-world consequences, it is ESG.

Unlike marketing or content automation, ESG outputs are:

Submitted to regulators

Shared with investors

Used in audits

Linked to financing and penalties

An AI hallucination in a blog post is embarrassing.

An AI hallucination in a carbon disclosure or compliance filing is a liability.

In ESG, trust matters more than novelty, and smaller, domain-trained models are generally easier to control.

ESG Is a High-Stakes Environment

Standard setters and regulators such as:

Global Reporting Initiative (GRI)

CDP (formerly the Carbon Disclosure Project)

European Commission (under CSRD)

U.S. Securities and Exchange Commission (SEC climate rules)

require:

Precise terminology

Traceable data

Consistent methodology

Audit-ready documentation

This is not open-ended creativity.

This is structured accountability.

Large general-purpose models are optimized for linguistic fluency, not regulatory precision.

Hallucination Risk Is Not Theoretical

LLMs are probabilistic systems. They generate the most statistically likely next token, not necessarily the most accurate statement.

In ESG contexts, this can lead to:

Incorrect emission factors

Fabricated framework references

Misinterpretation of scope boundaries

Confident but wrong compliance statements

A small language model trained on:

Approved emission factor libraries

Specific regulatory texts

Structured ESG taxonomies

Internal methodology documents

operates inside defined guardrails.

It has far less room to “creatively invent” outside its domain, which in ESG is a feature.

Data Privacy & Confidentiality

ESG platforms handle:

Supplier data

Energy consumption records

Financial exposure

Internal sustainability strategies

Many enterprises hesitate to push sensitive data through large, external foundation models.

SLMs offer:

On-prem or private-cloud deployment

Reduced data exposure

Controlled training sets

Easier audit trails

For sustainability leaders, this matters as much as accuracy.

Explainability & Governance

Board-level ESG discussions increasingly ask:

How was this emission calculated?

Which source was used?

Can we reproduce the result?

Is the methodology aligned with GRI / CSRD?

Smaller, domain-trained models:

Use narrower data sources

Operate within structured ontologies

Provide clearer traceability

That makes governance easier.

And governance is the backbone of credible sustainability.

The Strategic Insight

Large language models are impressive.

But ESG is not a creativity problem.

It is a compliance, structure, and accountability problem.

The smartest AI strategy for ESG organizations is not:

“How big can the model be?”

It is:

“How aligned is the model with the domain?”

And alignment, not size, is what builds long-term trust.

The Economic Case: Cost, Efficiency & ROI of Small Language Models

AI strategy in ESG cannot just be about performance. It must also make financial sense.

Sustainability teams are already working with:

Tight budgets

Expanding compliance scope

Increasing audit demands

Limited technical bandwidth

For ESG organizations, AI must reduce cost and complexity, not introduce more of it.

Infrastructure Costs: Bigger Models, Bigger Bills

Large Language Models (LLMs):

Require heavy GPU infrastructure

Consume significant inference compute

Demand constant fine-tuning

Often rely on expensive API calls

For ESG platforms processing:

Thousands of supplier submissions

Emission calculations

Reporting drafts

Compliance validations

Token costs add up quickly.

Small Language Models (SLMs):

Run on lighter infrastructure

Can be deployed on-premise

Require lower compute per query

Scale more predictably

For ESG use cases, where tasks are repetitive and structured, SLMs are typically far more cost-efficient.

Efficiency Gains in Structured Workflows

ESG work is not random conversation. It is structured workflow automation:

Scope 1, 2, 3 data classification

Emission factor matching

Reporting alignment with frameworks like the Global Reporting Initiative (GRI) Standards

Disclosure formatting for CDP questionnaires

Regulatory structuring under the European Commission's CSRD

These tasks benefit from:

Rule-based intelligence

Domain embeddings

Narrow contextual reasoning

An SLM fine-tuned on ESG-specific corpora:

Responds faster

Produces more consistent outputs

Reduces rework

Minimizes human correction cycles

That directly improves operational ROI.

Lower Risk = Lower Financial Exposure

Compliance errors have consequences:

Refiling costs

Consultant fees

Audit escalations

Potential penalties

A hallucinated data point in a sustainability report could:

Damage credibility

Trigger regulatory questions

Impact investor trust

Smaller, controlled models reduce this exposure.

And in risk-adjusted ROI calculations, reliability has economic value.

Time-to-Deployment Advantage

Training or integrating large foundation models can be complex.

SLMs:

Train faster

Fine-tune quicker

Deploy in shorter cycles

Integrate easily into ESG platforms

For organizations under pressure to meet new regulations, especially under CSRD and evolving SEC climate disclosures, speed matters.

Faster deployment = faster value realization.

Strategic Capital Allocation

ESG budgets should prioritize:

Data accuracy

Supplier onboarding

Measurement systems

Reduction initiatives

Not oversized AI experiments.

The smartest ESG organizations are not asking:

“How big is the model?”

They are asking:

“Does it improve reporting accuracy, reduce manual work, and protect compliance?”

And increasingly, the answer points toward small, domain-trained AI systems.

The Future of ESG AI: Domain Intelligence as Infrastructure

AI in ESG is moving past experimentation. The next phase is not about chatbots. It is about embedded intelligence.

The future of ESG AI is not bigger general models; it is domain-specific intelligence built directly into sustainability infrastructure.

From Tool to Infrastructure

In the early wave of AI adoption, organizations treated AI as an add-on:

A writing assistant

A data summarizer

A chatbot layer

But ESG platforms are evolving differently.

AI is becoming:

The engine that classifies supplier data

The system that flags compliance gaps

The layer that validates emission methodologies

The logic that aligns disclosures with frameworks

ESG Is a Structured Knowledge System

Sustainability is built on:

Emission factor databases

Regulatory texts

Sector-specific methodologies

Carbon accounting standards

The standards and rules issued by bodies such as:

Global Reporting Initiative

Carbon Disclosure Project

European Commission (CSRD)

Securities and Exchange Commission climate disclosures

are structured systems.

The future belongs to AI that understands these structures natively.

That means:

Domain-trained embeddings

Regulatory-aware reasoning

Controlled output environments

Built-in audit traceability

Vertical AI Will Outperform Horizontal AI

Horizontal LLMs aim to know everything.

Vertical ESG AI aims to know one domain deeply.

In the coming years, we will likely see:

ESG-specific language models

Climate-risk-trained reasoning engines

Sector-specific carbon accounting systems

AI embedded inside supply-chain reporting tools

These systems will not try to answer every question.

They will answer ESG questions precisely.

Governance Will Drive Architecture

Regulators are increasing expectations around:

Transparency

Data lineage

Reproducibility

Internal controls

As ESG disclosures mature, AI systems will need:

Explainable logic

Traceable decision paths

Structured data inputs

Controlled outputs

Small, domain-constrained models are better aligned with this governance-heavy future.

The Strategic Shift

The organizations that win in ESG AI will not be the ones that adopt the biggest models.

They will be the ones that:

Embed intelligence into workflows

Align AI with regulatory architecture

Optimize for trust over novelty

Build sustainable digital infrastructure

Because ESG itself is long-term.

And the AI powering it must be designed the same way.

Final Conclusion: Why Smaller Is the Smarter Long-Term Strategy

Artificial Intelligence is transforming every enterprise function.

But ESG is not just another function.

It sits at the intersection of:

Regulation

Investor scrutiny

Operational data

Climate accountability

Long-term corporate strategy

And that changes everything.

In ESG, intelligence must be precise, explainable, and accountable, not just powerful.

The Core Misconception

The market often assumes:

Bigger model = better performance.

That assumption may hold in open-ended generative tasks.

It does not hold in ESG.

Sustainability reporting under the standards and rules of bodies like:

Global Reporting Initiative

Carbon Disclosure Project

European Commission (CSRD)

Securities and Exchange Commission climate rules

requires:

Structured reasoning

Controlled outputs

Audit trails

Methodological consistency

This is not a creativity challenge.

It is an accountability challenge.

Why Small Language Models Win in ESG

Small, domain-trained models:

Operate within defined ESG taxonomies

Reduce hallucination risk

Lower infrastructure and token costs

Enable on-premise deployment

Improve explainability

Align with governance requirements

Deliver faster, repeatable workflows

They are optimized for precision over possibility.

And in ESG, that trade-off is not a limitation; it is a strategic advantage.

The Strategic Perspective for Leadership

For CIOs, CSOs, and ESG Heads, the real question is not:

“Which AI model is the most impressive?”

It is:

“Which AI architecture strengthens compliance, protects credibility, and scales sustainably?”

The future of ESG technology will not be built on the biggest models available.

It will be built on:

Domain intelligence

Structured data systems

Embedded compliance logic

Responsible AI architecture

Smaller models are not a step backward.

They are a step toward maturity.

The Long-Term View

ESG is a long-term discipline.

It demands:

Consistency year over year

Transparent methodology

Reliable data flows

Strategic decision support

The AI systems powering ESG must reflect those same principles.

In the race for bigger models, it is easy to chase scale.

But in ESG, scale without structure creates risk.